- cross-posted to:

- technology@lemmy.world

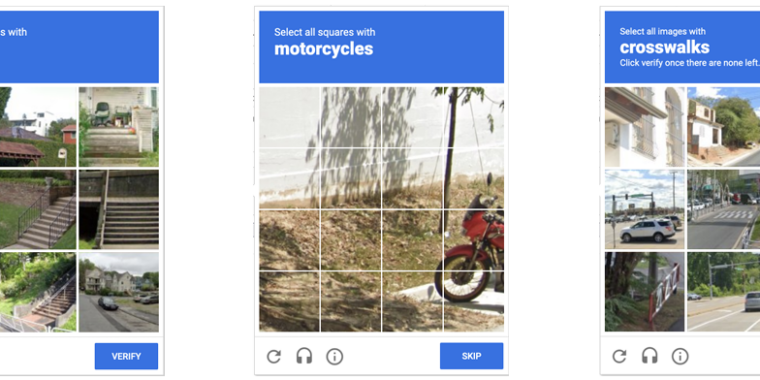

Anyone who has been surfing the web for a while is probably used to clicking through a CAPTCHA grid of street images, identifying everyday objects to prove that they’re a human and not an automated bot. Now, though, new research claims that locally run bots using specially trained image-recognition models can match human-level performance in this style of CAPTCHA, achieving a 100 percent success rate despite being decidedly not human.

ETH Zurich PhD student Andreas Plesner and his colleagues’ new research, available as a pre-print paper, focuses on Google’s reCAPTCHA v2, which challenges users to identify which street images in a grid contain items like bicycles, crosswalks, mountains, stairs, or traffic lights. Google began phasing that system out years ago in favor of an “invisible” reCAPTCHA v3 that analyzes user interactions rather than offering an explicit challenge.

Despite this, the older reCAPTCHA v2 is still used by millions of websites. And even sites that use the updated reCAPTCHA v3 will sometimes use reCAPTCHA v2 as a fallback when the updated system gives a user a low “human” confidence rating.
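
To make the fallback idea concrete, here is a rough sketch of how a site might decide between letting a user through and showing the older image grid. reCAPTCHA v3 does report a score between 0.0 and 1.0, but everything else here is an assumption for illustration: the `Verification` type, the `next_step` function, and the 0.5 cutoff are hypothetical, not Google's API or any particular site's logic.

```python
from dataclasses import dataclass

# Hypothetical cutoff; each site would choose its own value.
SCORE_THRESHOLD = 0.5


@dataclass
class Verification:
    """Illustrative stand-in for the result of a v3 check."""
    success: bool  # whether the token itself was valid
    score: float   # 0.0 (likely bot) to 1.0 (likely human)


def next_step(v: Verification, threshold: float = SCORE_THRESHOLD) -> str:
    """Decide what to do with a request based on its v3 result."""
    if not v.success:
        # Invalid or expired token: refuse outright.
        return "reject"
    if v.score >= threshold:
        # Confident enough that this is a human: no visible challenge.
        return "allow"
    # Low "human" confidence: fall back to the explicit v2 image grid.
    return "show_v2_challenge"
```

Under this sketch, a user scored 0.9 goes straight through, while one scored 0.2 gets the grid of street images, which is the same grid the research claims bots can now solve.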

the new ones suck so fucking much though

If I see the newer ones pop up at all I just skip whatever task was requiring me to bother with it.

i love when websites (twitter is a really bad example) hit me with like 8 captchas, and then if i get my username/password wrong i have to do another 8. It’s just so obviously gaming for training data on shit lmao.

What is it actually training? Google owns captcha right?

i have no clue, but i would assume it’s native to twitter if they’re pushing it that hard, either that or someone is paying a lot of money for that captcha access lol.